
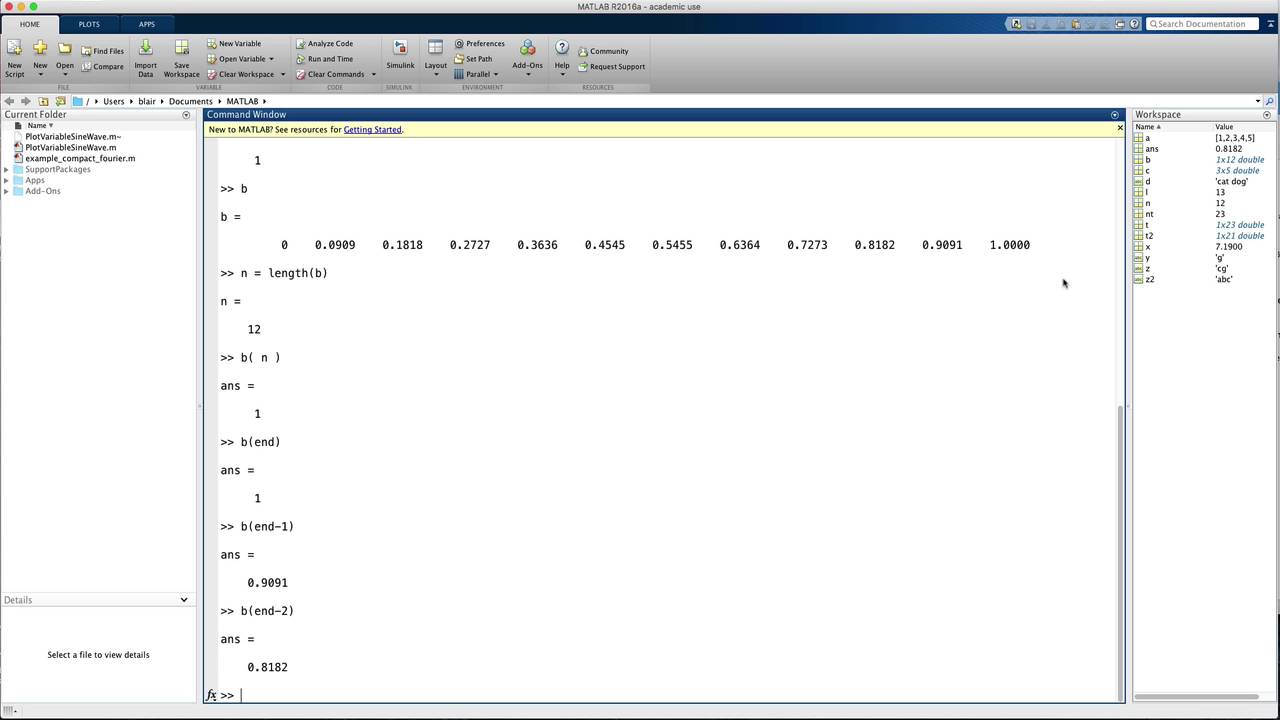

Software design must adapt to take advantage of these new processor technologies. Multi-threading and vectorisation are each powerful tools on their own, but only by combining them can performance be maximised. Modern software must leverage both threading and vectorisation to get the highest performance possible from the latest generation of processors.

Vectorisation is the process of converting an algorithm from operating on a single value at a time to operating on a set of values (a vector) at one time. Modern CPUs provide direct support for vector operations, where a single instruction is applied to multiple data (SIMD). For example, a CPU with a 512-bit register can hold 16 32-bit single-precision floats and apply one instruction to all of them, which is in principle up to 16 times faster than processing one value at a time. Combining this with threading and multi-core CPUs can lead to orders-of-magnitude performance gains.

There is a range of alternatives and tools for implementing vectorisation. They vary in terms of complexity, flexibility and future compatibility. The simplest way to implement vectorisation is to start with Intel's 6-step process. This process leverages Intel tools to provide a clear path to transforming existing code into modern, high-performance software that takes advantage of multicore and manycore processors.

Applying Vectorization to CVA Aggregation

The finance domain provides many good candidates for vectorisation. A particularly good example is the aggregation of Credit Value Adjustment (CVA) and other measures of counterparty risk.

The most common general-purpose approach to calculating CVA is based on a Monte Carlo simulation of the distribution of forward values for all derivative trades with a counterparty. The evolution of market prices over a series of forward dates is simulated, then the value of each derivative trade is calculated at each forward date using the simulated market prices. This gives us a 'path' of projected values over the lifetime of each trade. By running a large number of these randomised simulated 'paths', we can estimate the distribution of forward values, giving both the expected and the extreme 'exposures'. The simulation step results in a 3-dimensional array of exposures, indexed by trade, forward date and path.

A complete webinar (on Quantifi's site) and an associated slide deck (PDF) are also available.

The task of calculating CVA from these exposures occurs in several steps: netting, collateralisation, integration over paths, and integration over dates.
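The four aggregation steps named above (netting, collateralisation, integration over paths, integration over dates) can be sketched roughly as follows. This is a minimal illustration, not the article's implementation: the `CvaInputs` struct, the `aggregate_cva` function, the flat collateral amount, the recovery rate and the textbook-style discounting formula are all assumptions introduced here. The exposure array is stored with the path index contiguous, so the inner loops run over unit-stride data that a compiler may be able to vectorise.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical inputs for illustration; none of these names come from the article.
struct CvaInputs {
    std::size_t trades, dates, paths;
    std::vector<double> exposure;      // [trade][date][path], path index contiguous
    std::vector<double> discount;      // assumed discount factor per forward date
    std::vector<double> default_prob;  // assumed marginal default probability per date
    double collateral;                 // assumed flat collateral amount held
    double recovery;                   // assumed recovery rate
};

double aggregate_cva(const CvaInputs& in) {
    assert(in.paths > 0);
    assert(in.exposure.size() == in.trades * in.dates * in.paths);

    std::vector<double> net(in.paths);
    double cva = 0.0;

    for (std::size_t d = 0; d < in.dates; ++d) {
        // Step 1, netting: sum trade values per path for this date.
        std::fill(net.begin(), net.end(), 0.0);
        for (std::size_t t = 0; t < in.trades; ++t) {
            const double* row = in.exposure.data() + (t * in.dates + d) * in.paths;
            for (std::size_t p = 0; p < in.paths; ++p) {
                net[p] += row[p];
            }
        }

        // Step 2, collateralisation: subtract collateral and floor at zero.
        // Step 3, integration over paths: average to get the expected exposure.
        double sum = 0.0;
        for (std::size_t p = 0; p < in.paths; ++p) {
            sum += std::max(net[p] - in.collateral, 0.0);
        }
        const double expected_exposure = sum / static_cast<double>(in.paths);

        // Step 4, integration over dates: weight by discounting and default probability.
        cva += expected_exposure * in.discount[d] * in.default_prob[d];
    }
    return (1.0 - in.recovery) * cva;
}
```

Keeping the path dimension innermost is a design choice made for this sketch: the netting and collateralisation loops then touch consecutive memory, which is the access pattern SIMD units handle best.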

SIMD provides a way to increase performance using less power.
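As a rough illustration of the SIMD idea, the sketch below computes how many 32-bit floats fit in a 512-bit register and shows a simple loop over contiguous arrays, the kind of code a vectorising compiler can often turn into SIMD instructions. The names `kRegisterBits`, `kLanes` and `scale_add` are hypothetical, and whether the loop is actually vectorised depends on the compiler, its flags and the target CPU.

```cpp
#include <cassert>
#include <cstddef>

// Assumed register width for illustration (for example, a 512-bit vector unit).
constexpr std::size_t kRegisterBits = 512;

// Number of floats that fit in one register: 16 when float is 32 bits wide.
constexpr std::size_t kLanes = kRegisterBits / (8 * sizeof(float));

// out[i] = a * x[i] + y[i] for i in [0, n).
// Each iteration is independent and the arrays are read with unit stride,
// so a compiler may process up to kLanes elements per instruction.
void scale_add(float a, const float* x, const float* y, float* out, std::size_t n) {
    assert(n == 0 || (x != nullptr && y != nullptr && out != nullptr));
    for (std::size_t i = 0; i < n; ++i) {
        out[i] = a * x[i] + y[i];
    }
}
```

Calling `scale_add(2.0f, x, y, out, n)` on three arrays of length `n` gives the same results whether or not the compiler vectorises the loop; only the speed differs.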
